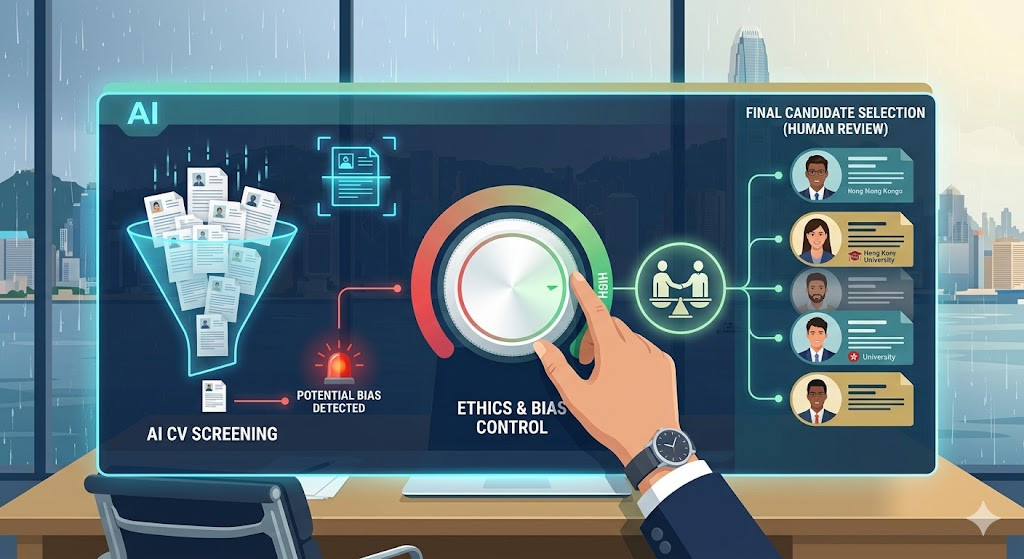

In 2026, AI screening of CVs has become routine at many companies. However, recent guidelines released by the Hong Kong Monetary Authority (HKMA) have many HR professionals concerned: if an AI automatically rejects a candidate simply because they hold a local degree rather than one from an Ivy League school, the company could be held legally liable.

Why should you care?

AI bias is often invisible. If the data fed into the AI is skewed toward a specific demographic, the AI reproduces that skew and becomes a "digital gatekeeper." The HKMA now requires financial institutions to maintain a "human-in-the-loop" approach: critical hiring decisions cannot be 100% automated, and there must be a record of human review.
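For teams wondering what a "record of human review" could look like in practice, here is a minimal Python sketch. Everything in it (the `ScreeningDecision` class, its field names, the rule that an AI rejection cannot be finalized without a named reviewer) is a hypothetical illustration, not something prescribed by the HKMA.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ScreeningDecision:
    """One candidate's screening record (hypothetical structure)."""
    candidate_id: str
    ai_recommendation: str                 # "advance" or "reject"
    reviewer: Optional[str] = None         # who performed the human review
    human_outcome: Optional[str] = None    # the reviewer's decision
    reviewed_at: Optional[datetime] = None

    def record_review(self, reviewer: str, outcome: str) -> None:
        """Store who reviewed the case, what they decided, and when."""
        self.reviewer = reviewer
        self.human_outcome = outcome
        self.reviewed_at = datetime.now(timezone.utc)

    def final_outcome(self) -> str:
        """The human decision wins; an unreviewed AI rejection is blocked."""
        if self.human_outcome is not None:
            return self.human_outcome
        if self.ai_recommendation == "reject":
            raise PermissionError("AI rejection requires a recorded human review")
        return self.ai_recommendation


decision = ScreeningDecision("c-001", "reject")
decision.record_review("hr.reviewer", "advance")  # the reviewer overrides the AI
print(decision.final_outcome())
```

The point of the design is that the audit trail is produced as a side effect of making the decision, so the review record cannot be skipped or reconstructed after the fact.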

HR Strategy Points:

- Algorithmic Audit: Audit your algorithms at least once a year to check whether the AI’s selections are becoming too homogeneous.

- Explainability: If a candidate complains, does your company have a way to explain why the AI rejected them?

- Local Context: Ensure the AI understands the prestige of "The Big Three" or specific local certifications; don't let a "Western-trained" AI stifle local talent.
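As a rough illustration of the audit point above, the Python sketch below compares how often the AI advances candidates from different groups. The group labels, the sample numbers, and the 0.8 threshold are all assumptions for illustration; the threshold borrows the "four-fifths" rule of thumb from US employment practice and is not an HKMA requirement.

```python
from collections import Counter


def selection_rates(records):
    """records: iterable of (group, selected) pairs -> selection rate per group."""
    total = Counter()
    chosen = Counter()
    for group, selected in records:
        total[group] += 1
        if selected:
            chosen[group] += 1
    return {group: chosen[group] / total[group] for group in total}


def impact_ratio(rates):
    """Lowest group selection rate divided by the highest one."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so there is no disparity to measure
    return min(rates.values()) / highest


# Made-up sample: 100 local-degree applicants (20 advanced),
# 50 overseas-degree applicants (20 advanced).
sample = (
    [("local", True)] * 20 + [("local", False)] * 80
    + [("overseas", True)] * 20 + [("overseas", False)] * 30
)

rates = selection_rates(sample)
ratio = impact_ratio(rates)
THRESHOLD = 0.8  # assumed rule of thumb, not a legal standard
print(rates, ratio, "flag for review" if ratio < THRESHOLD else "ok")
```

In this made-up sample, local-degree candidates are advanced at half the rate of overseas-degree candidates, which is the kind of gap an annual audit should surface for a human to investigate.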

References:

- HKMA: Circular on Artificial Intelligence in Financial Services (2026)

- Privacy Commissioner for Personal Data: AI Ethical Recruitment Framework